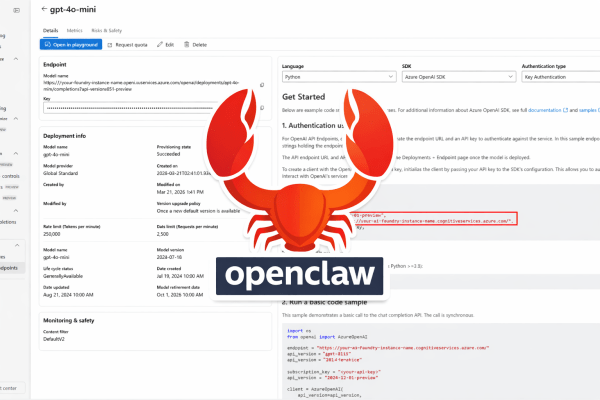

Most OpenClaw guides assume you’ll pay for OpenAI or Anthropic. But if you already have Azure credits, you can run OpenClaw for effectively free — if you know how to get Azure Foundry models working reliably. I spent hours fighting with it so you don’t have to. OpenClaw has been getting a lot of attention... Continue Reading →

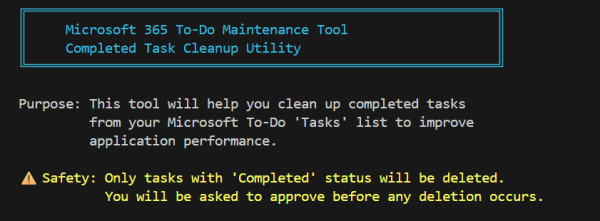

Cleaning Up Microsoft To Do: M365 To Do Maintenance Tool

Over the years, I’ve come to rely heavily on Microsoft To Do as my daily task manager. It’s simple, it syncs across devices, and it integrates nicely with the rest of Microsoft 365. But like many tools we use every day, it has its limits—and I recently ran headfirst into one. I had accumulated thousands... Continue Reading →

Oct 2025 Huge Month for Microsoft End of Support for Office and Windows

14 October 2025 is a big day when it comes to the Microsoft Office Add-ins world. It marks not only the official retirement of Windows 10, but also the end of Microsoft support for various other Office-related products, including Office 2016, Office 2019, Exchange Server 2016 and Exchange Server 2019. Here's many of... Continue Reading →

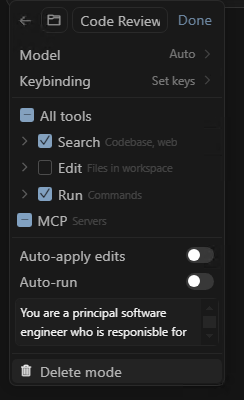

Streamline development tasks using personalised Custom Modes in Cursor

Do you have a bank of prompts you copy/paste into the Cursor chat when you want to achieve a specific task? Maybe it's when you want to do a code review, maybe it's when you want it to write unit tests for some new code, maybe it's when you want it to add OpenAPI specification... Continue Reading →

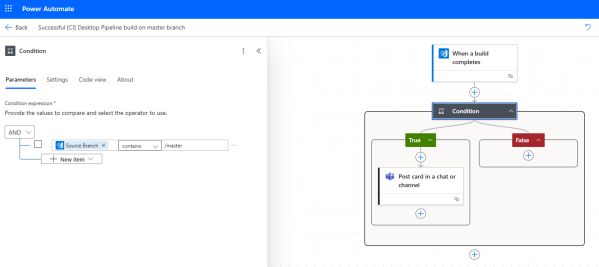

Notifying a Teams Channel on Azure DevOps Pipeline events

Azure DevOps has some built-in mechanisms to notify people on build and pipeline events. These are quite restrictive, however, and don't give you control over the format of the message, the method of delivery (just email) or the filtering that decides when a notification is triggered. For me I'm really interested in... Continue Reading →

Unlocking AI: Automating Microsoft 365 with Copilot Agents

I presented a session this week at the Digital Workplace Conference in New Zealand that landed really well according to the feedback I've received following the session. Most of the feedback revolved around the common thread that people are being bombarded with high level messaging around AI, Copilots and the benefits it could bring, the... Continue Reading →

New Outlook – Official Resources and Links

Now that New Outlook for Windows has gone into General Availability (1 Aug 2024), Microsoft has provided new and updated material to assist in many areas of planning and migrating users. Here's a roundup of some really useful links to official Microsoft guides and documentation. Migration Kit- Migration Guide- Frequently Asked Questions (FAQ)- Sample... Continue Reading →

How to control/block/prevent usage of New Outlook for Windows

Now that New Outlook for Windows has gone into General Availability, many organisations will be looking at how they will evaluate and control the deployment/migration of users from Classic Outlook to New Outlook. Some new resources have been made available to help here: Plan your migration to new Outlook for Windows Microsoft has provided several... Continue Reading →

My Experience as a Mentor at the Microsoft Data & AI Bootcamp

As someone who has been involved in the IT industry for over 25 years, I have always believed in the power of mentorship. Recently, I had the opportunity to participate as a mentor in the Microsoft Data & AI Bootcamp. The bootcamp was designed to provide students with the knowledge, skills, and confidence they need... Continue Reading →

New Outlook for Windows is Generally Available (now a supported MS product)

Microsoft reached the General Availability (GA) milestone for New Outlook for Windows on 1 August 2024. Moving a product from Preview to General Availability means a few things in the eyes of Microsoft: Microsoft does not recommend Preview products for production use, but once it goes GA that is Microsoft saying it is now ready... Continue Reading →